WELCOME TO THE ERA OF AI.

Finding the insights hidden in oceans of data can transform entire industries, from personalized cancer therapy to helping virtual personal assistants converse naturally and predicting the next big hurricane.

The NVIDIA® V100 Tensor Core GPU is the most advanced data center GPU ever built to accelerate AI, high performance computing (HPC), data science, and graphics. It is powered by the NVIDIA Volta architecture, comes in 16 GB and 32 GB configurations, and offers the performance of up to 32 CPUs in a single GPU. Data scientists, researchers, and engineers can now spend less time optimizing memory usage and more time designing the next AI breakthrough.

TESLA PRODUCT COMPARISON

| PRODUCT | KEY FEATURES | RECOMMENDED SERVER |
|---|---|---|
| TESLA V100 WITH NVLINK™ | Deep learning training: 3x faster deep learning training compared with last-generation P100 GPUs | Up to 4x V100 NVLink GPUs; up to 8x V100 NVLink GPUs |
| TESLA T4 | Deep learning inference: 60x higher energy efficiency than a CPU for inference | 1-20 GPUs per node |

Run AI and HPC workloads in a virtual environment for better security and manageability using NVIDIA Virtual Compute Server (vCS) software.

AI TRAINING

Recognising speech, training virtual personal assistants to communicate naturally, detecting lanes so that cars can drive autonomously: these are just a few examples of the increasingly complex challenges that data scientists are tackling with the help of AI. Solving such problems requires training far more complex deep learning models in a reasonable amount of time.

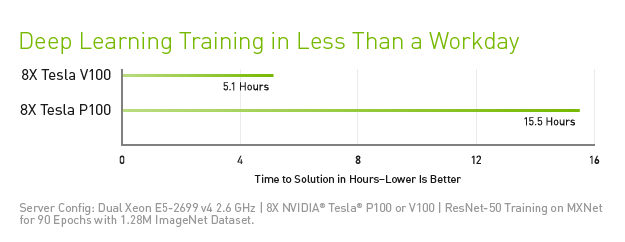

With 640 Tensor Cores, the Tesla V100 is the world's first GPU to break the 100 teraFLOPS (TFLOPS) barrier in deep learning performance. Second-generation NVIDIA NVLink™ connects multiple Tesla V100 GPUs at up to 300 GB/s, creating the world's most powerful computing servers. AI models that would have occupied previous systems for several weeks can now be trained within a few days. With training times this much shorter, AI becomes a practical solution for significantly more problems.

AI INFERENCE

To give us access to the most relevant information, services and products, hyperscale companies have started to use AI. But meeting user demands at all times is not easy. For example, the world's largest hyperscale companies estimate that they would have to double their data centre capacity if each user used their voice recognition service for just three minutes a day.

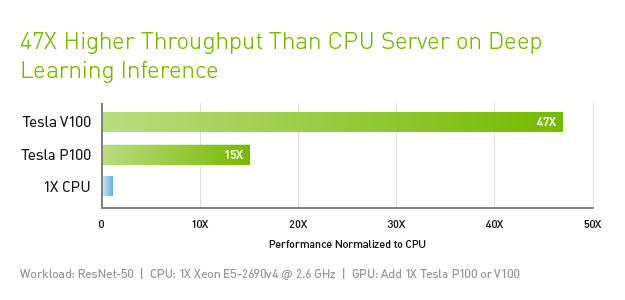

The Tesla V100 for Hyperscale is designed for maximum performance in existing hyperscale server racks. Thanks to its AI performance, a single 13 kW server rack with Tesla V100 GPUs delivers the same deep learning inference performance as 30 racks of CPU servers. This huge advance in throughput and efficiency makes scaling out AI services practical.

HIGH PERFORMANCE COMPUTING (HPC)

HPC is one of the pillars of modern science. From weather forecasting to drug development to the discovery of new energy sources, researchers in many fields use huge computer systems for simulations and predictions. AI expands the possibilities of traditional HPC: researchers can analyse huge amounts of data and extract valuable information from it in cases where simulation alone cannot fully predict real-world developments.

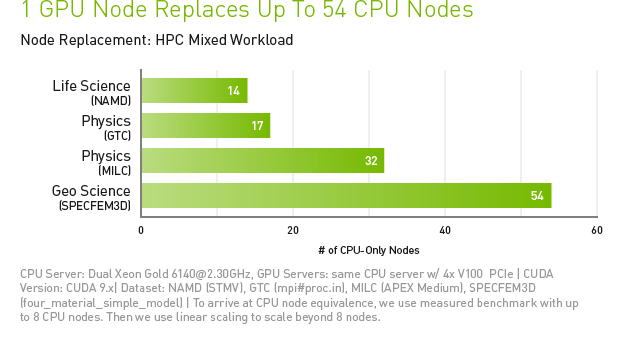

The Tesla V100 is designed for the convergence of HPC and AI. It serves as a platform for HPC systems used both in computational science for scientific simulation and in data science for finding valuable information in data. By combining CUDA Cores and Tensor Cores within a unified architecture, a single server with Tesla V100 GPUs can replace hundreds of standard CPU-only servers for traditional HPC and AI workloads. Now every researcher and engineer can afford an AI supercomputer to help them tackle their biggest challenges.

GRAPHICS PROCESSORS FOR DATA CENTRES

NVIDIA TESLA V100 FOR NVLINK

Ultimate performance for Deep Learning

NVIDIA TESLA V100 FOR PCIe

Optimal versatility for all workloads, designed for HPC

NVIDIA TESLA V100 SPECIFICATIONS

| SPECIFICATION | Tesla V100S for PCIe | Tesla V100 for PCIe | Tesla V100 for NVLink |
|---|---|---|---|
| PERFORMANCE with NVIDIA GPU Boost™: double precision | 8.2 TeraFLOPS | 7 TeraFLOPS | 7.8 TeraFLOPS |
| PERFORMANCE with NVIDIA GPU Boost™: single precision | 16.4 TeraFLOPS | 14 TeraFLOPS | 15.7 TeraFLOPS |
| PERFORMANCE with NVIDIA GPU Boost™: deep learning | 130 TeraFLOPS | 112 TeraFLOPS | 125 TeraFLOPS |
| INTERCONNECT BANDWIDTH (bidirectional) | PCIe 32 GB/s | PCIe 32 GB/s | NVLink 300 GB/s |
| MEMORY (CoWoS stacked HBM2): capacity | 32 GB | 32/16 GB | 32/16 GB |
| MEMORY (CoWoS stacked HBM2): bandwidth | 1134 GB/s | 900 GB/s | 900 GB/s |
| POWER (max. consumption) | 250 W | 250 W | |
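To make the specification figures easier to compare, the short Python sketch below derives a few ratios from them: deep learning throughput relative to single precision, single precision relative to double precision, and on-board memory bandwidth relative to interconnect bandwidth. The input numbers are copied from the specifications above. The dictionary layout and the helper name `derived_ratios` are hypothetical and chosen only for illustration. The inputs are peak figures, so the ratios are best read as rough upper bounds, not measured speedups.

```python
# Peak figures copied from the specification section (TeraFLOPS and GB/s).
# The key names and the helper below are illustrative assumptions.
SPECS = {
    "Tesla V100S for PCIe": {
        "fp64": 8.2, "fp32": 16.4, "dl": 130.0,
        "link_gbs": 32.0, "mem_gbs": 1134.0,
    },
    "Tesla V100 for PCIe": {
        "fp64": 7.0, "fp32": 14.0, "dl": 112.0,
        "link_gbs": 32.0, "mem_gbs": 900.0,
    },
    "Tesla V100 for NVLink": {
        "fp64": 7.8, "fp32": 15.7, "dl": 125.0,
        "link_gbs": 300.0, "mem_gbs": 900.0,
    },
}


def derived_ratios(spec):
    """Return simple ratios between the peak figures of one GPU variant."""
    return {
        # Peak deep learning throughput relative to single precision.
        "dl_over_fp32": spec["dl"] / spec["fp32"],
        # Single precision throughput relative to double precision.
        "fp32_over_fp64": spec["fp32"] / spec["fp64"],
        # How much faster on-board HBM2 is than the link to other devices.
        "mem_over_link": spec["mem_gbs"] / spec["link_gbs"],
    }


if __name__ == "__main__":
    for name, spec in SPECS.items():
        ratios = derived_ratios(spec)
        print(
            f"{name}: "
            f"DL/FP32 = {ratios['dl_over_fp32']:.1f}x, "
            f"FP32/FP64 = {ratios['fp32_over_fp64']:.1f}x, "
            f"HBM2/interconnect = {ratios['mem_over_link']:.1f}x"
        )
```

Read this way, the figures suggest that on every variant the deep learning peak is roughly eight times the single precision peak, and that the NVLink variant narrows the gap between memory bandwidth and interconnect bandwidth considerably compared with the PCIe variants.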