Compact power for the future

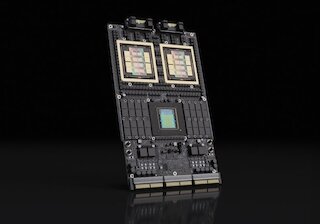

Supermicro unveiled new WIO servers featuring AMD EPYC™ 8005 processors at MWC 2026.

GTC 2026 Keynote Recap: The Most Relevant AI News

Agentic AI, new hardware, and the "5-Layer Cake" model: the key NVIDIA announcements for business practice, compactly summarized.