NVIDIA Isaac GR00T

The future of humanoid robotics

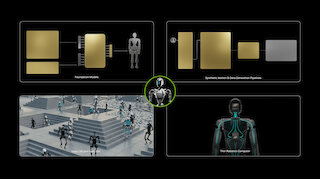

The future of robotics is here! NVIDIA Isaac GR00T revolutionizes the development of humanoid robots with AI-powered foundation models, simulation tools and powerful edge computing hardware.

In our news blog, we keep you up to date on the latest developments and trends in the IT world. Here you will find current information on our innovative solutions and services, insights into projects and successes, and valuable tips on IT infrastructures, cloud solutions, high-performance computing and more.

At sysGen, we place great importance on offering you not only first-class products and services, but also comprehensive information and insights that help you move your business forward. Our blog is your go-to source for expert advice, inspiring success stories and practical guides.

With the new DGX Spark and DGX Station, NVIDIA brings the power of data centre AI to the desktop: compact, powerful and cloud-ready. Built on the Grace Blackwell architecture, with up to 1,000 AI TOPS (trillion operations per second) and seamless scaling to the cloud, these desktop supercomputers are revolutionising AI development for researchers, developers and companies.

Under the motto ‘AI is advancing at an incredible pace’, NVIDIA CEO Jensen Huang unveiled a number of developments that have the potential to fundamentally change gaming, autonomous vehicles, robotics and AI for everyday life. The announcements include: NVIDIA Cosmos, GeForce RTX 50 Series, AI Foundation Models, Project Digits and ...

NVIDIA Isaac Sim and OpenUSD enable realistic robotics simulations, accelerate AI development and reduce costs. Virtual test scenarios make complex applications safe and efficient.

Tags: 3D | Into the Omniverse | NVIDIA Isaac Sim | Omniverse | Robotics | Synthetic Data Generation | Universal Scene Description
