RECORD PERFORMANCE IN NETWORK COMMUNICATION

DATA SPEED

IMPROVED PERFORMANCE

IMPROVED TOTAL COST OF OWNERSHIP

with 2,048 NDR ports

READY FOR EXASCALE

Higher scalability

with three hops (Dragonfly+)

ACCELERATED DEEP LEARNING

More AI acceleration

Computing Technology

PERFORMANCE WITH GREAT EFFECT

IMPROVEMENTS FOR SUPERCOMPUTERS AND APPLICATIONS IN THE FIELDS OF HPC AND AI

Accelerated in-network computing

Performance Isolation

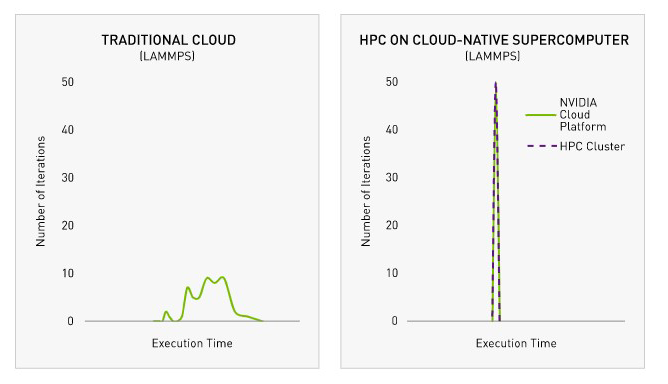

Cloud-native supercomputing

DELIVERING DATA AT THE SPEED OF LIGHT

Host Channel Adapter

The NVIDIA ConnectX-7 InfiniBand host channel adapter (HCA) supports PCIe Gen4 and Gen5, is available in a variety of form factors, and offers single or dual 400 Gb/s network ports.

ConnectX-7 InfiniBand HCAs include advanced in-network computing capabilities as well as additional programmable engines that enable preprocessing of data algorithms and offloading of application control paths to the network.

Switches with fixed configuration

NVIDIA Quantum-2 proprietary fixed-configuration switches provide 64 ports at 400 Gb/s or 128 ports at 200 Gb/s over 32 physical octal small form-factor pluggable (OSFP) connectors. The compact 1U design is available in air-cooled and liquid-cooled versions, each either internally or externally managed.

These fixed-configuration switches deliver an aggregate bidirectional throughput of 51.2 terabits per second (Tb/s) and a capacity of more than 66.5 billion packets per second.
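The 51.2 Tb/s figure can be checked from the port counts alone. The short sketch below multiplies the number of ports by the per-port rate and doubles the result to count both directions. It assumes every port runs at full line rate, and the function name `aggregate_bidirectional_tbps` is purely illustrative, not part of any NVIDIA tool.

```python
def aggregate_bidirectional_tbps(ports: int, gbps_per_port: int) -> float:
    """Return aggregate bidirectional throughput in Tb/s.

    Assumption: all ports run at full line rate in both directions.
    """
    one_direction_gbps = ports * gbps_per_port
    return one_direction_gbps * 2 / 1000  # Gb/s -> Tb/s


# Both port configurations of the fixed switch give the same aggregate.
for ports, rate in [(64, 400), (128, 200)]:
    tbps = aggregate_bidirectional_tbps(ports, rate)
    print(f"{ports} ports x {rate} Gb/s -> {tbps} Tb/s")
```

Both configurations yield the same total because splitting a 400 Gb/s port into two 200 Gb/s ports leaves the aggregate bandwidth unchanged.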

Modular switches

NVIDIA Quantum-2 proprietary modular switches provide the following port configurations:

- 2,048 ports at 400 Gb/s or 4,096 ports at 200 Gb/s

- 1,024 ports at 400 Gb/s or 2,048 ports at 200 Gb/s

- 512 ports at 400 Gb/s or 1,024 ports at 200 Gb/s

The largest modular switch has a total bidirectional throughput of 1.64 petabits per second (Pb/s), five times that of the previous-generation NVIDIA Quantum InfiniBand modular switch.
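As a rough cross-check of the 1.64 petabits per second figure, the sketch below applies the same arithmetic to the three modular configurations listed above: ports times per-port rate, doubled for both directions. The helper name `bidirectional_pbps` and the list of configurations are illustrative assumptions based only on the port counts given here, and full line rate on every port is assumed.

```python
def bidirectional_pbps(ports: int, gbps_per_port: int) -> float:
    """Return total bidirectional throughput in Pb/s.

    Assumption: every port carries its full line rate in both directions.
    """
    return ports * gbps_per_port * 2 / 1_000_000  # Gb/s -> Pb/s


# 400 Gb/s port counts of the three modular switch sizes listed above.
modular_port_counts = [2048, 1024, 512]

for ports in modular_port_counts:
    pbps = bidirectional_pbps(ports, 400)
    print(f"{ports} ports x 400 Gb/s -> {pbps:.2f} Pb/s")
```

The largest configuration works out to 1.6384 Pb/s, which rounds to the quoted 1.64 Pb/s.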

Transceivers and cables

NVIDIA Quantum-2 connectivity options provide maximum flexibility for building the topology of your choice. They include a variety of transceivers and multi-fiber push-on (MPO) connectors, active copper cables (ACCs), and direct-attach cables (DACs) with 1:2 or 1:4 splitter options.

Backward compatibility is also provided, so new 400 Gb/s clusters can connect to existing 200 Gb/s or 100 Gb/s infrastructure.