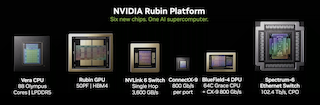

The world's first AI supercomputer ecosystem for models with trillions of parameters

Experience the next step in the evolution of artificial intelligence with the NVIDIA Rubin platform. Through the intensive co-design of six specialised chips, Rubin delivers up to 10 times lower inference latency and sets new standards in the efficiency of AI factories.

The NVIDIA Rubin platform is not just a new generation of GPUs, but a fully integrated architecture designed for the era of ‘Agentic AI’. By seamlessly combining computing power, connectivity and memory, Rubin can run models with more than 50 trillion parameters while drastically reducing operating costs and energy consumption.

Agentic AI & Autonomous Systems: Optimised for AI agents that perform complex chains of reasoning in real time.

Large-Context Reasoning: Ideal for applications with massive context windows, such as the analysis of entire legal texts or code repositories.

Enterprise AI Factories: Scalable infrastructure for hyperscalers and enterprises training their own Mixture-of-Experts (MoE) models.

Scientific Simulations: Extreme computing power for climate research, genomics and materials science.

Technical comparison

The following comparison illustrates the technological leap from the current Blackwell architecture to the new Vera Rubin platform. Whilst Blackwell laid the foundations for multimodal AI, Rubin is specifically optimised to push the boundaries of agentic reasoning. By moving to a 3nm manufacturing process and HBM4 memory, Rubin reaches a new level of data throughput and energy efficiency per operation.

| Feature | NVIDIA Rubin | NVIDIA Blackwell (B200) |
|---|---|---|
| Architectural focus | Agentic reasoning / MoE | Video / multimodal |
| Manufacturing process | TSMC 3nm | TSMC 4nm (multi-die) |
| Memory technology | HBM4 (up to 288 GB) | HBM3e (up to 192 GB) |
| Memory bandwidth | Up to 22 TB/s | Up to 8 TB/s |
| Interconnect (per GPU) | NVLink 6 (3.6 TB/s) | NVLink 5 (1.8 TB/s) |
| Supported model size | 50T+ parameters | Up to 10T parameters |
| Cooling | 100% liquid cooling | Liquid / air |
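The generational steps in the table can be expressed as simple ratios. The sketch below divides the Rubin figures by the Blackwell (B200) figures quoted above; because these are peak "up to" spec-sheet values, the ratios describe hardware headroom, not measured application speed-ups.

```python
# Peak figures copied from the comparison table: (Rubin, Blackwell B200).
# These are vendor "up to" values, not benchmark results.
SPECS = {
    "HBM capacity (GB)": (288, 192),
    "Memory bandwidth (TB/s)": (22, 8),
    "NVLink bandwidth per GPU (TB/s)": (3.6, 1.8),
}


def generational_ratios(specs: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Return the Rubin/Blackwell ratio for each spec, rounded to two decimals."""
    return {name: round(rubin / blackwell, 2) for name, (rubin, blackwell) in specs.items()}


if __name__ == "__main__":
    for name, ratio in generational_ratios(SPECS).items():
        print(f"{name}: {ratio}x")
```

On the table's numbers this works out to 1.5x memory capacity, 2.75x memory bandwidth and 2x NVLink bandwidth per GPU.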

Vera CPU

The Vera CPU uses 88 specialised Olympus cores to orchestrate data streams and control flows. It acts as the brain of the system, keeping the GPUs in an AI factory supplied with data so that they do not sit idle.

Rubin GPU

With 50 petaflops of FP4 performance and integrated HBM4 memory, the Rubin GPU is designed specifically for next-generation transformer workloads that demand unprecedented memory bandwidth.
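To relate the 50-trillion-parameter claim to the 288 GB of HBM4 per GPU, the following back-of-the-envelope sketch estimates how many Rubin GPUs would be needed just to hold a model's weights at FP4 precision (4 bits, i.e. half a byte, per parameter). It is a lower bound under stated assumptions: decimal gigabytes, weights only (no KV cache, activations or framework overhead) and perfectly even sharding across GPUs.

```python
import math

BITS_PER_PARAM_FP4 = 4        # FP4 stores each weight in 4 bits
HBM4_PER_GPU_BYTES = 288e9    # 288 GB per Rubin GPU; decimal GB is an assumption


def weight_bytes(num_params: float, bits_per_param: int = BITS_PER_PARAM_FP4) -> float:
    """Bytes needed to store the raw weights at the given precision."""
    return num_params * bits_per_param / 8


def min_gpus_for_weights(num_params: float, hbm_bytes_per_gpu: float = HBM4_PER_GPU_BYTES) -> int:
    """Lower bound on GPU count: weights only, evenly sharded, no other memory use (assumption)."""
    return math.ceil(weight_bytes(num_params) / hbm_bytes_per_gpu)


if __name__ == "__main__":
    params = 50e12  # 50 trillion parameters
    print(f"FP4 weights: {weight_bytes(params) / 1e12:.0f} TB")
    print(f"Minimum GPUs (weights only): {min_gpus_for_weights(params)}")
```

For a 50T-parameter model this gives 25 TB of FP4 weights and a floor of 87 GPUs; a real deployment needs considerably more memory for the KV cache and activations.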

NVLink 6

The sixth-generation NVLink switch acts as a local nervous system. With 3.6 TB/s of bandwidth per GPU, the connected GPUs can operate as a single, massive processor.

BlueField-4 DPU

A technological highlight: the dual-die package combines a 64-core Grace CPU with a ConnectX-9 chip. This allows the AI factory to be operated securely and its data flows to be managed efficiently.

ConnectX-9

The NVIDIA ConnectX-9 is a high-performance network interface for endpoints. With a throughput of 800 Gb/s per port, it delivers the low latency required to move data efficiently between the compute nodes of the AI factory. It forms the backbone for ‘scale-out’, i.e. the interconnection of thousands of GPUs into a cohesive system.

Spectrum-6 Ethernet Switch

The Spectrum-6 is the world’s first Ethernet switch to rely exclusively on co-packaged optics (CPO). With a total capacity of 102.4 Tb/s, it allows the network infrastructure to scale massively. Integrating the optical components directly into the package not only drastically increases energy efficiency but also improves reliability in large-scale data centre installations.
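The Spectrum-6 capacity and the ConnectX-9 port speed quoted above can be related by simple division. The sketch assumes, purely for illustration, that the full 102.4 Tb/s of switch capacity is broken out into 800 Gb/s ports; the actual port configuration depends on the switch model and on how the network is designed.

```python
SWITCH_CAPACITY_GBPS = 102_400  # Spectrum-6 total capacity: 102.4 Tb/s
NIC_PORT_GBPS = 800             # ConnectX-9 throughput per port


def ports_at_speed(capacity_gbps: int, port_gbps: int) -> int:
    """Number of full-speed ports the switch capacity could serve (hypothetical breakout)."""
    return capacity_gbps // port_gbps


if __name__ == "__main__":
    print(ports_at_speed(SWITCH_CAPACITY_GBPS, NIC_PORT_GBPS))
```

Under that assumption a single switch could serve 128 ConnectX-9 ports at full line rate.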

Do you need more? Matching hardware for AI training

Our high-performance storage solutions offer high capacity and speed to efficiently store and retrieve large amounts of data. Perfect for the requirements of big data and machine learning.

Our workstations are developed specifically for the demanding requirements of AI training and offer outstanding computing power. Well suited to research and development, they support a wide range of AI applications. With state-of-the-art hardware and flexible configuration options, we ensure that your AI projects can be realised successfully.

Our inference hardware offers the necessary computing capacity to execute AI models in real time. Ideal for applications such as autonomous vehicles, image processing and voice control.

First step: contact sysGen

- How are NVIDIA DGX systems optimised for AI training?

NVIDIA DGX systems are specially designed for deep learning training. They combine powerful GPUs, such as the NVIDIA A100 and H100, which are designed for extensive parallel data processing. This makes them particularly efficient for training complex AI models with large amounts of data.

- What are the benefits of Supermicro HGX platforms for AI training?

Supermicro HGX platforms offer customised solutions for AI training with high-density GPU integration and excellent cooling to handle the thermal requirements of powerful GPUs. These systems are designed to minimise latency and maximise computing power for intensive AI training.

- What should be considered when selecting an AI training server?

When selecting an AI training server, the specific requirements of the AI model, such as data volume, model complexity and desired training time, should be taken into account. In addition, factors such as system scalability, GPU performance and the possibility of integration into existing infrastructures are crucial.

- Can DGX and HGX servers be scaled for training tasks?

Yes, both NVIDIA DGX and Supermicro HGX platforms support scalable architectures that allow the addition of more units to keep pace with the demands of growing AI projects. This makes it easier to train larger models or run multiple trainings in parallel.