THE CHALLENGE OF SCALING ENTERPRISE AI

Every business needs to transform itself using artificial intelligence (AI), not just to survive but to thrive in tough times. To do so, the enterprise needs an AI infrastructure platform that improves on traditional approaches, which relied on slow computing architectures siloed by analytics, training, and inference workloads. That older approach introduced complexity, drove up costs, limited scalability, and was not ready for modern AI. Enterprises, developers, data scientists, and researchers need a new platform that unifies all AI workloads, simplifies infrastructure, and accelerates ROI.

THE UNIVERSAL SYSTEM FOR ALL AI WORKLOADS

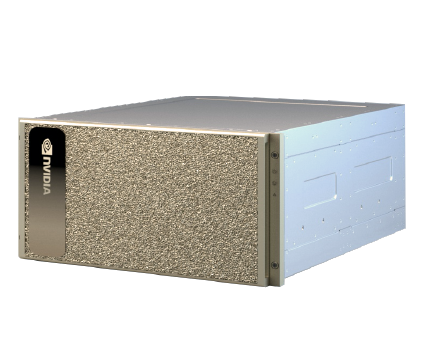

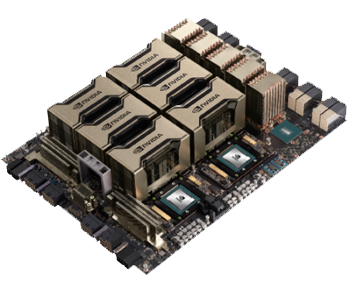

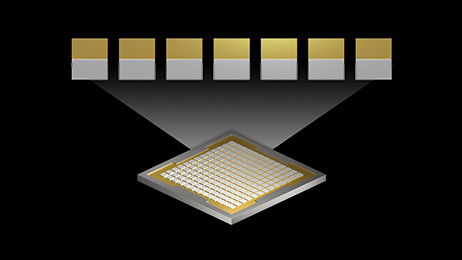

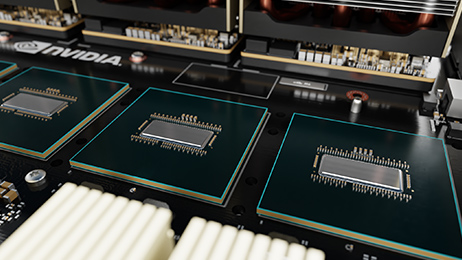

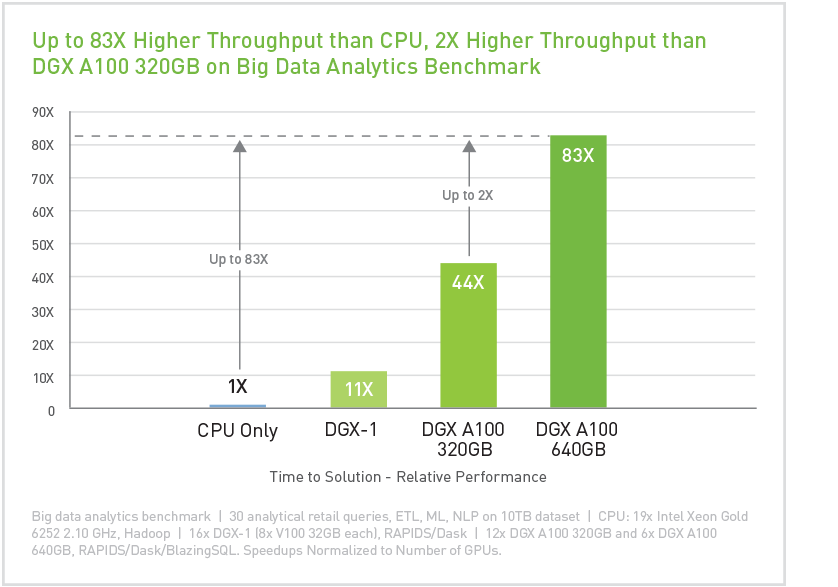

NVIDIA DGX™ A100 is the universal system for all AI workloads, from analytics to training to inference. The DGX A100 sets new standards for compute density, packing 5 petaFLOPS of AI performance into a 6U form factor and replacing legacy compute infrastructure with a single, unified system. The DGX A100 also provides an unprecedented ability to allocate compute power at fine granularity through the Multi-Instance GPU (MIG) feature of the NVIDIA A100 Tensor Core GPU, so administrators can assign resources that are right-sized for specific workloads.

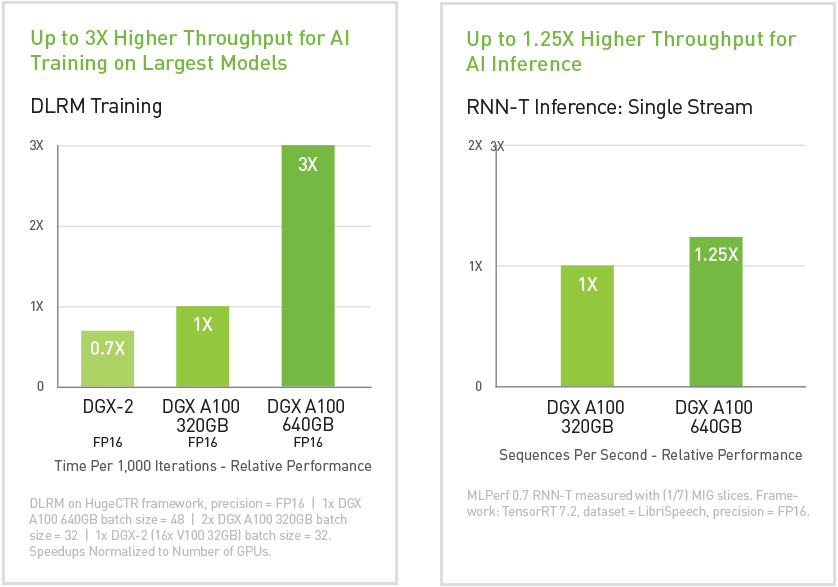

The DGX A100 is available with up to 640 gigabytes (GB) of total GPU memory, which increases performance by up to three times for large training jobs and doubles the size of MIG instances. This allows the DGX A100 to handle the largest and most complex jobs as well as the simplest and smallest. The DGX A100 runs the DGX software stack and optimized software from NGC. The combination of dense compute performance and complete workload flexibility makes the DGX A100 an ideal choice for both single-node deployments and large-scale Slurm and Kubernetes clusters deployed with NVIDIA DeepOps.
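To make the memory figures above concrete, the following minimal Python sketch works through the arithmetic. It rests on assumptions that are not stated in this text: that the system contains 8 A100 GPUs, that each GPU can be split into at most 7 MIG instances, and that the smallest MIG instance receives one eighth of a GPU's memory. The function name `mig_summary` is hypothetical and exists only for this illustration.

```python
# Illustrative arithmetic only. The three constants below are assumptions
# about the DGX A100 and MIG, not values taken from the text above.
GPUS_PER_SYSTEM = 8            # assumed number of A100 GPUs per system
MAX_MIG_INSTANCES_PER_GPU = 7  # assumed upper limit of MIG instances per GPU
MEMORY_SLICES_PER_GPU = 8      # assumed number of memory slices per GPU


def mig_summary(total_gpu_memory_gb: int) -> dict:
    """Derive per-GPU and per-instance figures from total GPU memory."""
    memory_per_gpu_gb = total_gpu_memory_gb // GPUS_PER_SYSTEM
    smallest_instance_gb = memory_per_gpu_gb // MEMORY_SLICES_PER_GPU
    return {
        "memory_per_gpu_gb": memory_per_gpu_gb,
        "smallest_instance_gb": smallest_instance_gb,
        "max_instances_per_system": GPUS_PER_SYSTEM * MAX_MIG_INSTANCES_PER_GPU,
    }


if __name__ == "__main__":
    # Compare a 320 GB configuration with the 640 GB configuration.
    for total_gb in (320, 640):
        print(total_gb, mig_summary(total_gb))
```

Under these assumptions, moving from 320 GB to 640 GB of total GPU memory raises the smallest MIG instance from 5 GB to 10 GB, which is consistent with the statement that the larger configuration doubles the size of MIG instances.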

IT DEPLOYMENT SERVICES AVAILABLE

Want to shorten time to insight and accelerate ROI from AI? Let our professional IT team deploy and integrate the world's first 5 petaFLOPS AI system, NVIDIA® DGX™ A100, into your infrastructure seamlessly and non-disruptively, with 24/7 support.

Get the results and outcomes you need:

- Site analysis, readiness, pre-testing and staging

- Access to dedicated engineers, solution architects and support technicians

- Deployment planning, scheduling and project management

- Shipping, logistics management and inventory provisioning

- On-site installation and on-site or remote software configuration

- Post-deployment check-up, support, ticketing, and maintenance

- Lifecycle management including design, upgrades, recovery, repair and disposal

- Rack-and-stack, integration services, and multi-site deployment

- Customized break-fix and managed service contracts