NVIDIA GTC 2026 Keynote

NVIDIA ushers in the age of AI factories: the highlights at a glance

This year’s NVIDIA GTC keynote was far more than just a product showcase – it served as a strategic roadmap for the future of AI in business. Jensen Huang painted a picture of a new era in which AI no longer merely communicates, but acts autonomously, solves complex problems and prepares decisions.

The message is clear: AI is becoming the core infrastructure of every business. The technologies presented are no longer experiments for research laboratories, but production-ready tools that will fundamentally transform everyday business operations.

Four practical areas of focus follow from this:

- Agent-based AI is becoming a reality: with NeMo and OpenShell, you can now launch your first pilot projects – securely, in a controlled manner, and with a direct link to your business processes.

- The infrastructure is becoming scalable: from the compact DGX Spark for agile teams to the high-performance, liquid-cooled AI Factory – there is a suitable solution for every scale.

- Edge and embedded systems are gaining in importance: if AI is to operate in the real world, it requires robust, secure and low-latency platforms – this is precisely where the new embedded solutions come in.

- Data becomes a strategic advantage: the new storage architectures make your data assets truly AI-ready, transforming them from mere repositories of information into intelligent capital.

Here are the most relevant announcements for all companies looking to put AI to productive use now.

Perhaps the most significant announcement concerns agent-based AI. Over the past few months, OpenClaw – a platform for AI agents capable of independently planning and executing tasks – has gained rapid traction.

The problem: OpenClaw on its own was often too risky for businesses – there were security vulnerabilities and no clear governance structures.

This is where NVIDIA NemoClaw comes in – an open-source stack that extends OpenClaw with the OpenShell runtime. OpenShell creates an isolated sandbox environment that controls when and how agents access sensitive data.
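To make the idea of controlled access more concrete, here is a minimal conceptual sketch in Python of a deny-by-default gate that decides whether an agent may read a resource and records every attempt. It is not the OpenShell API: the names `SandboxPolicy`, `check` and `read_resource`, as well as the agent and resource names, are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SandboxPolicy:
    """Hypothetical allow-list policy (illustration only, not the OpenShell API)."""

    # Maps an agent name to the set of resources it is allowed to read.
    allowed: dict[str, set[str]] = field(default_factory=dict)
    # Every access attempt is recorded as (agent, resource, granted).
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def check(self, agent: str, resource: str) -> bool:
        # Deny by default: unknown agents and unlisted resources are refused.
        granted = resource in self.allowed.get(agent, set())
        self.audit_log.append((agent, resource, granted))
        return granted


def read_resource(policy: SandboxPolicy, agent: str, resource: str,
                  store: dict[str, str]) -> str:
    """Return the resource content only if the policy grants access."""
    if not policy.check(agent, resource):
        raise PermissionError(f"{agent} may not read {resource}")
    return store[resource]
```

In this sketch, an agent that is listed for `invoices` but not for `payroll` can read the former, while a request for the latter raises `PermissionError`. Both attempts end up in `audit_log`, which is the kind of trail a governance process would review.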

What this means for businesses:

- For the first time, businesses can develop and deploy agent-based AI systems – with controlled access to internal data.

- The agents run locally – on the organisation’s own hardware – and do not leave the corporate network.

- The framework is hardware-agnostic but optimised for NVIDIA platforms.

For regulated sectors such as finance, healthcare or the public sector, this is a game-changer. At last, there is a framework that combines the power of open AI agents with the necessary security.

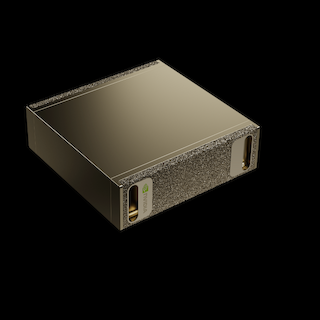

DGX Spark: The personal AI factory

The NVIDIA DGX Spark brings supercomputer performance to your desk. Powered by the GB10 Grace Blackwell superchip, it delivers up to 1 petaFLOP of AI performance and can run models with up to 200 billion parameters locally.

Particularly exciting: up to four DGX Spark units can now be connected to form a cluster – a ‘desktop data centre’ for development teams.
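A quick back-of-the-envelope calculation shows what the 200-billion-parameter figure implies for memory. The sketch below counts only the model weights (no KV cache, activations or runtime overhead) and assumes 128 GB of unified memory per unit, a figure that is not stated in this article and should be checked against the official specification.

```python
def weights_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_param / 8 / 1e9


# Assumption: unified memory of a single unit; not taken from this article.
DGX_SPARK_MEMORY_GB = 128

for bits in (16, 8, 4):
    size = weights_gb(200, bits)
    fits = size <= DGX_SPARK_MEMORY_GB
    print(f"{bits:>2}-bit weights: {size:.0f} GB, fits in one unit: {fits}")
```

Under these assumptions, a 200-billion-parameter model needs roughly 400 GB at 16-bit, 200 GB at 8-bit and 100 GB at 4-bit precision. Only the 4-bit variant would fit on a single unit, so the headline figure most plausibly refers to heavily quantised models.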

Practical relevance:

- Developers and data scientists gain an environment in which they can develop and test AI models locally – without cloud costs and without data leaving their own hardware.

- With the NVIDIA AI software stack pre-installed and support for tools such as Ollama, LM Studio and vLLM, you can get up and running straight away.

- Perfect for initial experiments with agent-based AI – the agents developed can later be seamlessly migrated to larger environments.
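As an illustration of what getting started with one of these tools might look like, the following sketch sends a prompt to a locally running Ollama server over its HTTP API, using only the Python standard library. It assumes Ollama is listening on its default port 11434 and that a model has already been pulled. The model name `llama3.2` is a placeholder, not a recommendation from the keynote.

```python
import json
import urllib.request

# Ollama's default local endpoint for single-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build the POST request; the default model name is a placeholder."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3.2", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Summarise our returns policy in two sentences.")` on a machine with a running Ollama server returns the model's answer as a string. Because the request never leaves `localhost`, the prompt and the response stay on the local machine.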

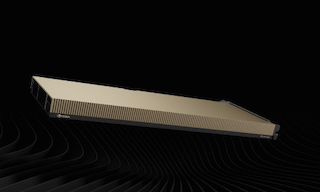

RTX PRO 4500 Blackwell Server Edition

NVIDIA has unveiled the RTX PRO 4500 Blackwell Server Edition for production use in data centres or at the edge. In a compact single-slot form factor (165 watts), it delivers:

- Up to 100x the performance for vision AI compared to CPU servers

- Up to 50x the performance for vector databases

- 10x the performance for small language models compared to the previous generation

What makes it special: The card supports vGPU 20.0 and Multi-Instance GPU (MIG) – ideal for virtualised environments and flexible resource allocation.

For business applications, this means:

- Powerful AI accelerators for existing data centres – without the need to completely overhaul the infrastructure.

- Ideal for edge scenarios where space and cooling are limited.

- Combines well with modern storage solutions for end-to-end AI pipelines.

Looking ahead: With the Vera Rubin platform, NVIDIA has unveiled the next generation of AI infrastructure. The fully liquid-cooled rack architecture combines 72 Rubin GPUs and 36 Vera CPUs in a single system and promises 10 times the performance per watt.

For companies planning for the long term, this is an important consideration: the technology remains scalable – those who invest in the right platforms today will be able to easily expand tomorrow.

For those interested in robotics, autonomous systems or industrial control, there was also some important news:

- NVIDIA IGX Thor is now generally available – an industrial-grade platform for real-time AI at the edge, with functional safety for medical and industrial applications.

- The Cosmos world models and the Newton physics engine (developed with Google DeepMind and Disney) enable robots to understand physical relationships and perform realistic simulations.

Autonomous systems in manufacturing, logistics or space travel are thus provided with a powerful, secure platform – and simulation and reality are converging ever more closely.

No data, no AI. The NVIDIA BlueField-4 STX storage architecture brings computing power closer to the data and eliminates the bottlenecks that often arise with large AI workloads.

Together with partners such as DDN, NetApp, VAST Data and WEKA, NVIDIA is creating end-to-end solutions that cover the entire pipeline from a single source, from data collection through to model deployment.